Something fundamental is shifting in how humans and AI work together, and it’s moving fast enough to be genuinely disorienting. We’re at an inflection point. The transformation is real, it’s accelerating, and most people can feel it even if they can’t name it. I’ve been trying to find the right frame for it. The closest model I’ve found is the membrane.

Engineering has a long history of borrowing from biology and finding that the patterns hold. Neural networks. Genetic algorithms. Swarm intelligence. Hub-and-spoke topologies that mirror how cells cluster and communicate. We reach for nature’s blueprints because evolution has had a few billion years to optimize for complex systems. When something in nature rhymes with something in technology, it’s worth paying attention.

The membrane is one of those rhymes.

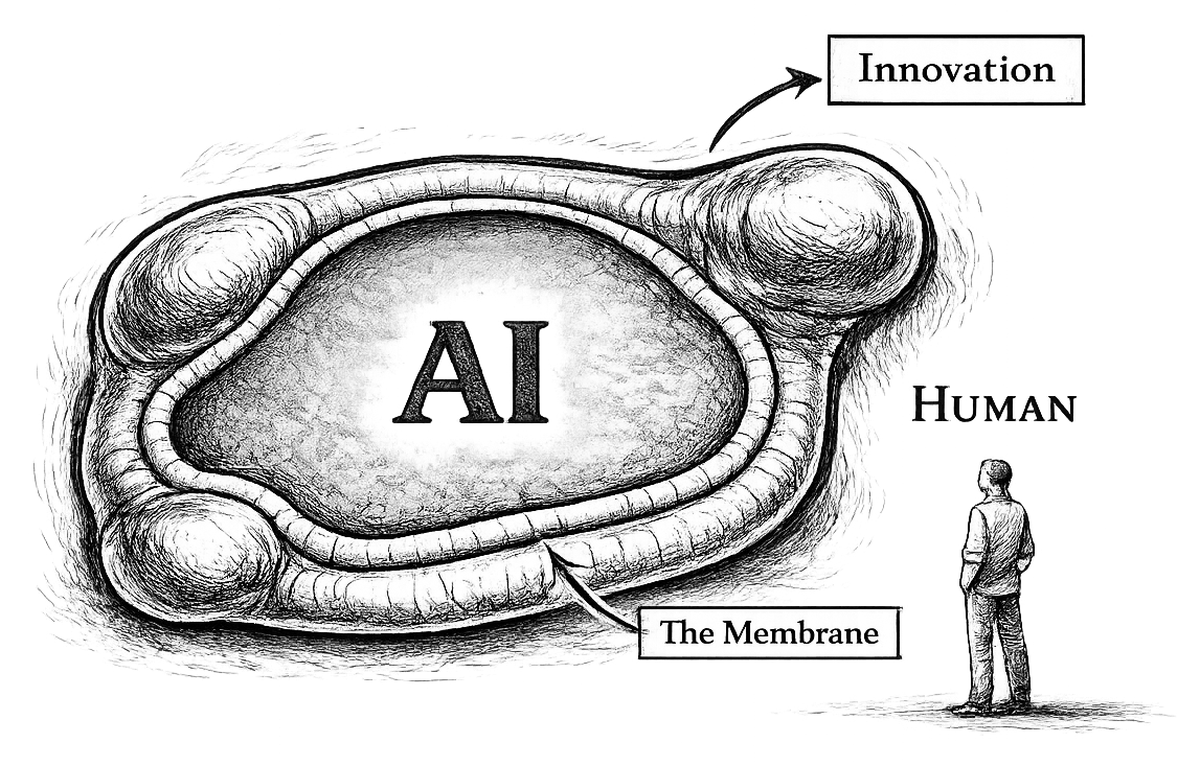

Conceptual model of the human–AI capability boundary.

The Cell

Picture a cell. Inside is everything AI and agents can handle autonomously: not just simple tasks, but work that requires intent, context, and multi-step reasoning. The boundary is further out than most people’s mental models have caught up to. Outside is everything that still requires a human: choosing which problems matter, navigating ambiguity where the data won’t give you a clean answer, and building the kind of trust that only comes from another person in the room.

The cell is growing. Fast.

METR’s research shows that the length of tasks agents can complete autonomously has been doubling roughly every seven months, and more recently closer to every four. Their benchmarks measure isolated tasks, and real work is messier, so the exact numbers deserve some caution. But the direction is what matters. The cell is expanding, and it’s not slowing down.
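To get a feel for what those doubling times imply, here is a back-of-the-envelope sketch. It assumes the doubling rate stays constant, which is my simplification rather than anything METR claims, and the function name and the 24-month window are made up for illustration.

```python
def growth_factor(months_ahead: float, doubling_months: float) -> float:
    """How many times longer tasks get after `months_ahead` months,
    assuming (hypothetically) a constant doubling time."""
    return 2 ** (months_ahead / doubling_months)


# Compare the two doubling times mentioned above over an arbitrary 24 months.
for doubling in (7, 4):
    factor = growth_factor(24, doubling)
    print(f"doubling every {doubling} months: about {factor:.1f}x after 24 months")
```

Under those assumptions, two years at the seven-month pace is roughly an 11x increase in task length, and at the four-month pace it is 64x. Real progress won't follow a clean curve, but the gap between the two rates is the point.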

Stay on the Wall

If you’re doing work that’s inside the cell, you should be asking why you’re not delegating it. If you’re doing work that’s outside, you’re applying skills that agents can’t replicate. Yet. That boundary is shifting every few months.

The position of leverage is the membrane itself. The edge between what AI can do and what it can’t. That’s where you’re orchestrating agents, evaluating outputs, deciding what to delegate and what to own, and recalibrating as the boundary moves.

A concrete example: any time you find yourself copying and pasting information into or out of an agent, you’re doing work that’s inside the cell. If you can access the information, the agent most likely can too, given the right permissions (grant them carefully, and only as much access as the task needs). The membrane work is wiring the agent to the source directly and deciding what to do with what comes back.

The skill isn’t any single task. The skill is reading the membrane: where is it thin, where is it about to stretch, what just crossed over, what’s stubbornly staying outside and why. That requires actually using the tools (you can’t read the membrane from a distance), staying current with what agents can do (which changes faster than most people realize), and being honest about which of your tasks have quietly moved inside.

The membrane doesn’t move once and settle. It moves continuously. You learn a skill today. Maybe it’s already inside the cell and you don’t know it yet. Maybe it’s outside today and inside in six months. The durable skill is navigating that evolution, not mastering either side of it.

The Surface Area Argument

Here’s the part I think most people miss.

When a cell grows, the volume increases. But so does the surface area. The membrane gets bigger.

The membrane is the human-AI interface. Every point where people and agents interact, hand off, evaluate, and direct. A larger cell means a larger membrane. More surface area. More points of interaction, not fewer.

As the cell grows, the tasks inside it change hands. But the boundary where humans engage with AI grows too. The work shifts from “human does the task” to “human guides, evaluates, and orchestrates.” The contact surface keeps expanding. There will be more human-AI interaction in the future, not less. It’ll just look different, and it’ll keep changing.
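The geometry behind this is easy to check. Here is a minimal sketch that treats the cell as a sphere, which is an idealization I'm adding to make the metaphor computable, not a claim about how capability is actually shaped.

```python
import math


def sphere_volume(radius: float) -> float:
    """Volume of a sphere: (4/3) * pi * r^3."""
    return 4 / 3 * math.pi * radius ** 3


def sphere_area(radius: float) -> float:
    """Surface area of a sphere: 4 * pi * r^2."""
    return 4 * math.pi * radius ** 2


# Each time the radius doubles, compare interior and membrane
# against the starting cell (radius 1, arbitrary units).
for radius in (1, 2, 4):
    volume_ratio = sphere_volume(radius) / sphere_volume(1)
    area_ratio = sphere_area(radius) / sphere_area(1)
    print(f"radius x{radius}: volume x{volume_ratio:.0f}, surface area x{area_ratio:.0f}")
```

For a sphere, doubling the radius multiplies the volume by 8 and the surface area by 4. The interior grows faster than the membrane, but the membrane still grows in absolute terms, and that absolute growth is the part the argument relies on.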

Getting Practical

We’re all trying to read a membrane that’s moving faster than we can track. Here are a few things that have helped me stay closer to the edge:

Use the tools daily. You cannot develop intuition for where the membrane sits by reading about AI. You have to use it. Every week I throw agents at something I assume they’ll fail at. Half the time, the boundary has moved since I last checked. That’s the calibration loop: you have to run it constantly or your model of the membrane drifts.

Audit your own work. Look at your last week honestly. How much of it was inside the cell? I started keeping a rough log: tasks I did myself versus tasks I delegated. The ratio shifts every few months, and noticing that shift is how I know where the membrane is stretching.

Watch for the stretch. When a new model drops or a new capability emerges, the membrane doesn’t move uniformly. It bulges in specific places. Pay attention to where the new pressure is concentrated. Those are the spots where your skills at the boundary matter most.

Get comfortable not knowing. The membrane will keep moving. The map you build today will be wrong in six months. That’s fine. The skill is the mapping, not the map.
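The rough log from the audit habit doesn't need to be more than a list and a ratio. Here is one way it could look, as a sketch: the `TaskEntry` fields, the function name, and the sample entries are all invented for illustration, not a record of my actual log.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class TaskEntry:
    day: date
    description: str
    delegated: bool  # True if an agent did the work, False if I did it myself


def delegation_ratio_by_month(entries: list[TaskEntry]) -> dict[tuple[int, int], float]:
    """Return {(year, month): fraction of logged tasks that were delegated}."""
    total: Counter = Counter()
    delegated: Counter = Counter()
    for entry in entries:
        key = (entry.day.year, entry.day.month)
        total[key] += 1
        if entry.delegated:
            delegated[key] += 1
    return {key: delegated[key] / total[key] for key in sorted(total)}


# Made-up sample entries, three months apart.
log = [
    TaskEntry(date(2025, 1, 6), "summarize meeting notes", True),
    TaskEntry(date(2025, 1, 7), "draft project plan", False),
    TaskEntry(date(2025, 1, 9), "triage bug reports", False),
    TaskEntry(date(2025, 4, 7), "summarize meeting notes", True),
    TaskEntry(date(2025, 4, 8), "triage bug reports", True),
    TaskEntry(date(2025, 4, 10), "decide quarterly priorities", False),
]

for (year, month), ratio in delegation_ratio_by_month(log).items():
    print(f"{year}-{month:02d}: {ratio:.0%} delegated")
```

The individual numbers matter less than the trend. A task type that flips from "did it myself" to "delegated" between two months is a spot where the membrane just moved.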

Stay on the wall.